The Alchemy of Unlike Minds

In the early 1990s, I was negotiating the acquisition of a state-owned business in Shanghai, before it was all the rage. The tension in the room on The Bund boiled down to one word: escrow. It is the convention we use in the West in asset-based purchases to cope with dynamic work in progress. The problem was that there was no parallel Chinese convention; for them, it signalled distrust. We resolved it only because I had spent a long time building a relationship with the people I was working with, and they were prepared to trust me rather than the company. It was an uncomfortable time, because my trust in my corporate leadership was considerably less than my trust in the people across the table in Shanghai. My masters dealt in numbers. The Chinese worked in relationships. The gap between them was what I would now recognise as mētis, the “cunning intelligence” that sits in the spaces between what we can measure.

There was no right or wrong, just difference. Until we could find a way to establish common ground, all the different parties could do was look for someone to blame. That makes being the one in the middle uncomfortable, but critical. There is real value in evidence-based judgement, but it is incomplete in the same way that depending entirely on relationships is incomplete. Each needs the other for completeness and resilience under pressure.

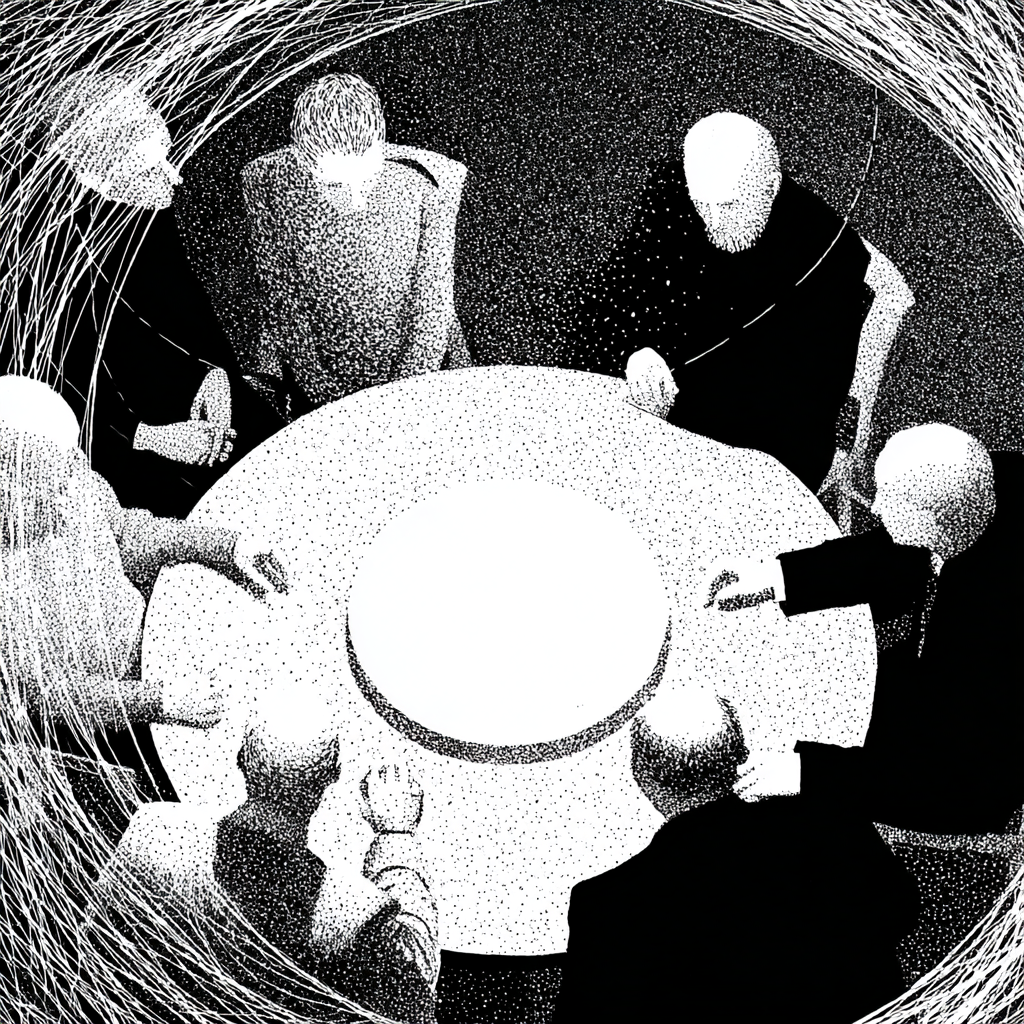

Working at the boundaries of AI feels very similar. The fundamentalists on both sides (efficiency, productivity and profit on one, human values on the other) are making all the noise. Neither is wrong, but neither is listening. The result is a complex mess driven by the defence of what is known, rather than curiosity and openness to what is not. One of the biggest challenges in working at the edge of things is dealing with real differences of language, culture, worldviews and values, and finding ourselves struggling to reconcile the seemingly irreconcilable. It has been a consistent theme of my work for decades, but never has it felt quite as ubiquitous as it does now. Sitting in the tension is hard work, but when we can manage it, the meeting of unlike minds has an alchemy to it.

David Pye's distinction between the workmanship of certainty and the workmanship of risk gives one way of naming what is at stake. Bill Sharpe's Three Horizons framework gives another; the chasm between what we know we are doing in Horizon One and the uncertainty of Horizon Three is precisely the territory where neither camp's tools are sufficient on their own. Iain McGilchrist's work on the difference between attending to the whole and attending to the parts has given me a framework I return to constantly. In recent years I have developed a profound respect for diplomats, who work in all these spaces simultaneously, managing boundaries and continuing to function despite the pressures exerted by those for whom life is simpler when they can take refuge in the familiar. What these frameworks share is a recognition that the space between unlike ways of knowing is not a problem to be eliminated. It is where the serious work happens.

AI is an unlike mind.

I think we have to put as much effort into developing a relationship with it as I had to in those endless meetings over three years in Shanghai, because when we hit unexpected challenges — and we will — we will need those relationships to hold. What does it actually mean to build a relationship with a mind that has no continuity, no memory of yesterday's conversation, no stake in the outcome? That tension is not a technical problem waiting for a software solution. It is the human problem of working with genuine otherness, which is something we have always found difficult and have never been able to shortcut. The assumption that those fluent in the logic of what created AI therefore understand its implications and its second and third order consequences strikes me as, at best, hubristic, and at worst, dangerous.

That does not mean either side can be discounted, or that those arguing for the primacy of human relationships as the anchor for AI are simply right. Technology has always required us to adapt, from language to our first mark-making, and I cannot see AI as any different, other than in the speed at which it will impact us.

AI shows no signs of patience and is accelerating the evidential side of the equation at exponential rates. This creates an imbalance, and I think we need to address our relationship with what data does not understand if we are to make what is happening a genuine source of human growth.

Perhaps the most significant challenge is what I think of as a cognitive illusion. AI is very good at parsing, reassembling, and playing with what we know, but it cannot peer into what we do not. The difficulty is that nobody has told it that, and it is easy under pressure, when AI has become such an important part of our lives, to assume that it can. AI is very good at Horizon One but has no conception of Horizon Three. The bridge between the two, the emergent, uncertain territory of Horizon Two, is our job. We can probably automate much of the workmanship of certainty, but none of the workmanship of risk. It can feed us options but it cannot negotiate. The real impact of AI, I suspect, is that it reminds us of the things we are capable of but have stopped doing. Exploring the space between things needs the constructive conflict of unlike minds, not memetic repetition.

The tension we are holding here is as old as time. We are dealing with polarities where two opposing sides each believe they are right and the space in the middle is genuinely difficult to occupy. Adam Kahane writes about it in his work on power and love, the situation where we lack the power to overcome, the willingness to accept, and the ability to leave. McGilchrist frames it as the mental experience of sitting with uncertainty while the parts-focused mind demands resolution. These are local versions of challenges that are not local at all but global, from climate change to inequality to exhausted economic models. That global reach, if we play it intelligently, works in our favour. The easiest way to reconcile warring parties has always been to introduce an external threat that unites them, and if climate change, inequality and aspiring hegemons do not qualify as that, I am not sure what does.

It is why I find alchemy as a description, rather than a metaphor, so useful. It is the workmanship of risk rather than certainty, following a path we believe will work, but which could fail at any point if we do not pay attention to what is happening around us. It is the fast feedback of the OODA loop and the discipline of mindfulness as we distil new heuristics for the change we face. There are no guarantees, and nobody is coming to rescue us. It is the knowledge that we have the capability if we can exercise the will.

So what does it actually look like to hold this tension in practice, rather than simply naming it?

In Shanghai, it looked like three years of meals, conversations and small acts of reliability before a single document was signed. The escrow problem was not solved by finding a better legal mechanism; it was dissolved by the quality of the relationship that had been built around it. The numbers people in London never fully understood what had happened or why, because what had happened was not in the numbers. It was in the accumulated weight of presence, of turning up, of being someone the people across the table had learned they could trust even when it was inconvenient to do so.

The Buurtzorg nursing model in the Netherlands offers a more recent version of the same principle at an institutional scale. By dismantling the layers of process compliance and metric-driven management that characterised conventional care, and returning decision-making to small teams of nurses working directly with patients, they achieved thirty per cent higher patient satisfaction at forty per cent of the authorised care hours of traditional providers. Their overhead runs at eight per cent against a competitor average of twenty-five per cent. This is not soft. This is what happens when you trust the people closest to reality to exercise their judgement rather than comply with systems designed to manage institutional anxiety. The mētis was there all along. The institution had simply built structures that prevented it from operating.

The alchemists understood this problem. They were not solitary eccentrics. They worked in small, serious communities they called the sodalitas, bound not by institution or credential but by shared commitment to a problem that could not be solved from the outside. They wrote to each other across Europe with remarkable precision about what they had observed at the furnace, what had failed, and what the material had told them when it refused to behave as expected. Their literature was deliberately obscure, not to exclude but because they understood something that took philosophy several more centuries to name properly: the knowledge they were after could not be transmitted to someone who had not already begun the work. It was readable only from inside the practice. What they developed, over years, was not a methodology but a discipline of attention, the capacity to read failure as information, to sit with a material that was changing in ways they could not yet name, to distinguish the rhythm of the work from the impulse to conclude it prematurely.

Paracelsus (1493-1541) was a Swiss physician and alchemist who burned the works of the ancient medical authorities in public, insisted that knowledge belonged to practitioners rather than scholars, and spent his life travelling Europe learning from miners, healers and craftspeople. His central conviction was that the practitioner's own character was a variable in the work: greed, impatience and the desire for quick results did not merely reflect poor attitude but produced, structurally, a different and inferior quality of observation. The vessel mattered as much as the material. The heat had to be sustained at the right temperature over a long period. And the work began not when you understood it but when you started.

In many respects, the alchemists were heretics. Across five centuries, a particular kind of figure recurs with striking consistency: the practitioner-heretic, whose transgression is not primarily political or theological but epistemological. Da Vinci dissecting corpses illegally because he needed to know how bodies actually worked. Faraday, the blacksmith's son with no university education, seeing relationships between phenomena that trained mathematicians had missed because his eye had never been disciplined into their blind spots. McClintock befriending her corn plants and waiting thirty years for a Nobel Prize that finally confirmed what her quality of attention had shown her decades earlier. What connects them is not rebellion for its own sake but a particular orientation toward knowing: they trusted what they found over what they had been told, they developed their understanding through sustained, embodied engagement with their material, and they paid the price that institutions reliably exact from those whose evidence contradicts the identity of those who would need to act on it. Their heresy was, in every case, a form of fidelity — to the thing itself, to the work, to what the material was actually showing them.

The evidence for the power of unlike minds is not merely felt. Scott Page, a complexity scientist at the University of Michigan, has spent two decades demonstrating mathematically what the alchemists understood practically: that unlike minds working on a genuinely complex problem outperform groups of similar experts, not despite their differences but because of them. When no single perspective can hold the whole problem, you want people getting it wrong in different ways. The diversity is not the difficulty to be managed. It is the capability. His Bletchley Park example makes this concrete: the team that cracked the Enigma code drew on mathematicians, engineers, linguists, historians, crossword puzzle experts and classical scholars working together, and it was precisely their cognitive differences that made the breakthrough possible. No single discipline could have reached it alone.

But the research is equally unambiguous about the conditions required. Unlike minds only produce their particular alchemy in environments where people feel safe enough to bring what is genuinely different about them into the room rather than leaving it at the door. That does not happen by accident or by announcement. It happens through the slow accumulation of trust that comes from showing up, from being present through difficulty, from demonstrating over time that the differences are not merely tolerated but actually needed. This is what I was attempting in Shanghai over three years of meetings, and it is what the sodalitas, at its best, is designed to make possible. Not a room of people who agree, but a vessel strong enough to hold people who do not, for long enough that something none of them could have reached alone becomes possible.

This is where we find ourselves. In the space between an unacceptable old and an unfamiliar new, the only way to discover what possibility looks like is to engage with it. There is no uniform way to do it. We will each find our own path, but we will find it better in a community of practice that shares what it has found, challenges what it has been taught to accept, and experiments with what it does not yet understand. From AI to ways of working and everything beyond.

The space that neither side can name is not a compromise. It is where the work actually happens.

Evolving Experiments...

- What does "right brain" AI look like?

- Creating offline "Sandboxes" for experiments

- The Power of Play in Uncertainty

- What do we really want from "work"?

More on this in the next couple of weeks...
